Multicore debugging challenges in Zephyr RTOS: Part 2 – Cache coherency

By Bea Ben Ali

Introduction

In part 1 of our three-part blog series, we discussed how to debug and analyze race conditions.

This second installment focuses on detecting cache coherency issues, another common problem in multicore systems whose cores must cooperate on shared data.

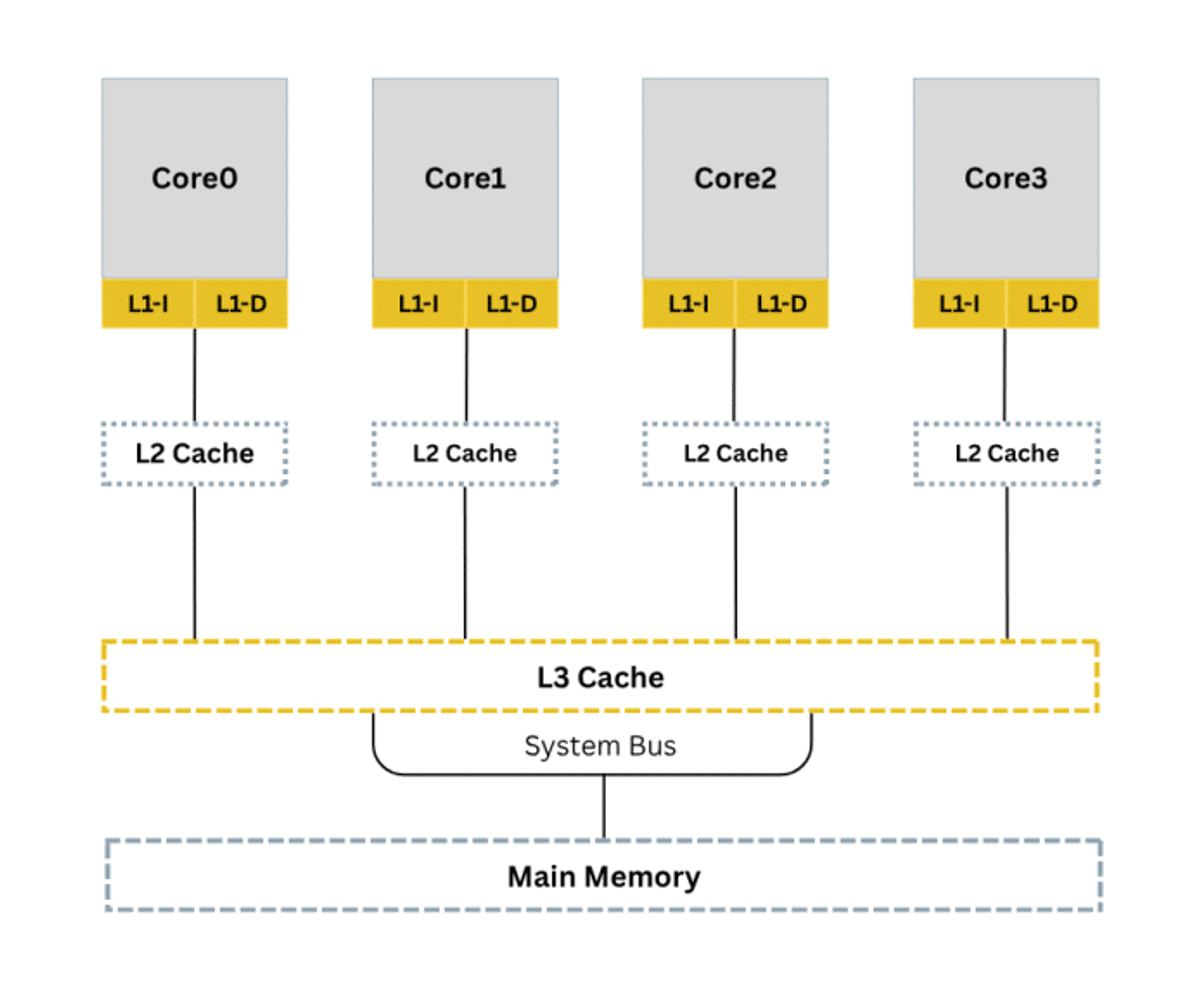

Before we dive in, let’s first review how caches can be organized in a multicore system.

The diagram below shows how cache memory in a quad-core system is typically divided into L1, L2, and L3.

The hardware decides which data is held in each level, but as developers we can influence this through where we place our data in memory and how those memory regions are configured.

L1 is the fastest but smallest level and is usually private to each core, while L3 is the largest and slowest and is shared across multiple cores.

In this post, we focus on a dual-core system in which each core has its own cache, and present ideas on how to trace and handle the resulting cache coherency problems.

Debugging cache coherency problems

Cache coherency problems occur when several cores each hold their own cached copy of the same data and access it without coordination. If one core changes its copy, the others may keep using stale data, causing unpredictable behavior. A traditional debugger typically shows the contents of main memory rather than the values held in a core's cache, so a stale or not-yet-written-back value can stay invisible.
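As a rough illustration of the stale-data scenario, here is a host-side C model. It is a toy with hypothetical names (`toy_cache`, `core_read()`, `cache_flush()`, `cache_invalidate()`), not a description of any real cache controller: each core gets a one-line write-back cache in front of a single shared word, and `memory_view` stands in for what a memory-only view such as a debugger would show.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>

/* Toy model, not real hardware: one shared word in "main memory" and a
 * one-line private write-back cache per core. */
static int main_memory;

struct toy_cache {
	bool valid; /* line holds a copy of main_memory */
	bool dirty; /* copy was modified and not yet written back */
	int value;
};

/* A read hits in the private cache if it can, so it may return stale data. */
static int core_read(struct toy_cache *c)
{
	if (!c->valid) {
		c->value = main_memory; /* miss: fetch from main memory */
		c->valid = true;
		c->dirty = false;
	}
	return c->value;
}

/* Write-back policy: only the private copy changes. */
static void core_write(struct toy_cache *c, int v)
{
	c->value = v;
	c->valid = true;
	c->dirty = true;
}

/* "Flush" (clean): write a dirty copy back to main memory. */
static void cache_flush(struct toy_cache *c)
{
	if (c->valid && c->dirty) {
		main_memory = c->value;
		c->dirty = false;
	}
}

/* "Invalidate": discard the copy so the next read goes to main memory. */
static void cache_invalidate(struct toy_cache *c)
{
	c->valid = false;
}

/* Core 0 writes 42 while core 1 already holds a copy of the old value. */
static void run_scenario(int *stale, int *memory_view, int *fresh)
{
	struct toy_cache core0 = {0}, core1 = {0};

	assert(stale != NULL && memory_view != NULL && fresh != NULL);
	main_memory = 0;
	(void)core_read(&core1);    /* core 1 caches the initial 0 */
	core_write(&core0, 42);     /* new value lives only in core 0's cache */
	*stale = core_read(&core1); /* cache hit: still 0 */
	*memory_view = main_memory; /* memory-only view: also still 0 */

	cache_flush(&core0);        /* writer side: clean the dirty line */
	cache_invalidate(&core1);   /* reader side: drop the stale copy */
	*fresh = core_read(&core1); /* miss, refetch: now 42 */
}
```

Calling `run_scenario()` yields a stale read of 0 and a memory view of 0 while core 0 already holds 42; only after the flush on the writer side and the invalidate on the reader side does core 1 read 42. That pairing, clean after writing and invalidate before reading, is the usual rule for shared data on cores whose caches are not kept coherent by hardware.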

Debugging with printf() can also create “Heisenbugs”: the time spent formatting and printing output changes the system's timing, so the bug may disappear while you are observing it.

Below is an animation showing how the values seen by two cores diverge when the caches are not managed:


Overcoming cache coherency issues on Zephyr RTOS with SystemView and Ozone

As shown in part 1, we first instrument the code with SystemView so that it logs system events. Initialize SystemView before placing event macros in key sections of the code.

Enable debugging features in Zephyr

As a starting point, let’s configure Zephyr for multicore debugging and tracing:

```
CONFIG_MP_MAX_NUM_CPUS=2
```

Enable the cache management option:

```
CONFIG_CACHE_MANAGEMENT=y
```

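(Editor’s note: Enabling this option makes Zephyr's cache management API, such as sys_cache_data_flush_range() and sys_cache_data_invd_range(), available. It does not by itself keep the cores' caches coherent in an AMP system, so shared data still needs explicit cache maintenance or placement in non-cacheable memory. If the option is not available on your target, use the tips below.)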

Instrument the code for tracing

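The next code snippets use atomic operations, which make each read-modify-write of a shared variable indivisible. On their own, atomics keep both cores' views consistent only if the shared variable lives in memory that is hardware-coherent or non-cacheable, or is explicitly flushed and invalidated.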

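SystemView provides SEGGER_SYSVIEW_Printf() and SEGGER_SYSVIEW_PrintfHost() for tracing without printf(). SEGGER_SYSVIEW_Printf() formats the string on the target, while SEGGER_SYSVIEW_PrintfHost() sends the format string and its arguments unformatted and lets the SystemView host application do the formatting, which keeps the overhead on the target lower.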
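To show why deferring the formatting is cheaper, here is a host-side C sketch of the idea. It is not SystemView's implementation: `trace_record()`, `trace_format()` and the fixed-size buffer are hypothetical, and each event carries a single integer argument for simplicity. Recording costs two stores; the expensive `snprintf()` call happens later, on the side that reads the log.

```c
#include <assert.h>
#include <stddef.h>
#include <stdio.h>
#include <string.h>

/* Hypothetical deferred-formatting trace log, analogous in spirit to
 * "format on the host": store the format pointer and raw argument only. */
#define TRACE_CAPACITY 16

struct trace_event {
	const char *fmt; /* pointer to a constant format string */
	int arg;         /* one integer argument, for simplicity */
};

static struct trace_event trace_buf[TRACE_CAPACITY];
static size_t trace_count;

static void trace_reset(void)
{
	memset(trace_buf, 0, sizeof trace_buf);
	trace_count = 0;
}

/* Cheap on the "target": two stores, no string formatting. */
static void trace_record(const char *fmt, int arg)
{
	if (trace_count < TRACE_CAPACITY) {
		trace_buf[trace_count].fmt = fmt;
		trace_buf[trace_count].arg = arg;
		trace_count++;
	}
	/* A full buffer drops the event instead of blocking the caller. */
}

/* Done later on the "host": format event i into out. */
static int trace_format(size_t i, char *out, size_t out_len)
{
	assert(i < trace_count);
	return snprintf(out, out_len, trace_buf[i].fmt, trace_buf[i].arg);
}
```

Because the recording path never formats text, its timing impact stays small and roughly constant, which makes it much less likely to mask a timing-dependent bug than a printf() call.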

We create two threads, one on each core. The shared data is defined in a separate source file that both cores can access.

Attempt 1: Inconsistent shared data

Code snippet: main for core 1

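```c
#include <zephyr/kernel.h>
#include <SEGGER_SYSVIEW.h>

#define STACKSIZE 1024

extern int shared_data; /* defined once in the file shared by both cores */

int main(void)
{
	while (1) {
		/* Plain read: may return a stale copy from this core's cache */
		SEGGER_SYSVIEW_PrintfHost("Core1: shared_data = %d", shared_data);
		k_msleep(10);
	}
	return 0;
}
```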

Code snippet: main for core 0

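```c
#include <zephyr/kernel.h>
#include <SEGGER_SYSVIEW.h>

#define STACKSIZE 1024

extern int shared_data; /* defined once in the file shared by both cores */

int main(void)
{
	while (1) {
		/* Plain read: may return a stale copy from this core's cache */
		SEGGER_SYSVIEW_PrintfHost("Core0: shared_data = %d", shared_data);
		k_msleep(10);
	}
	return 0;
}
```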

(Editor’s note: shared_data is declared extern so that both cores can access it.)

Attempt 2: Consistent shared data

Code snippet: main for core 0

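```c
#include <zephyr/kernel.h>
#include <zephyr/sys/atomic.h>
#include <SEGGER_SYSVIEW.h>

#define STACKSIZE 1024

extern atomic_t shared_data; /* defined once in the file shared by both cores */

int main(void)
{
	while (1) {
		SEGGER_SYSVIEW_PrintfHost("Core0: Pre-Increment: %d", (int)atomic_get(&shared_data));
		/* Indivisible read-modify-write: no increment is lost */
		atomic_inc(&shared_data);
		SEGGER_SYSVIEW_PrintfHost("Core0: Post-Increment: %d", (int)atomic_get(&shared_data));
		k_msleep(10);
	}
	return 0;
}
```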

Code snippet: main for core 1

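```c
#include <zephyr/kernel.h>
#include <zephyr/sys/atomic.h>
#include <SEGGER_SYSVIEW.h>

#define STACKSIZE 1024

extern atomic_t shared_data; /* defined once in the file shared by both cores */

int main(void)
{
	while (1) {
		SEGGER_SYSVIEW_PrintfHost("Core1: Pre-Increment: %d", (int)atomic_get(&shared_data));
		/* Indivisible read-modify-write: no increment is lost */
		atomic_inc(&shared_data);
		SEGGER_SYSVIEW_PrintfHost("Core1: Post-Increment: %d", (int)atomic_get(&shared_data));
		k_msleep(10);
	}
	return 0;
}
```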
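The effect of atomic increments can be reproduced on a desktop machine with a host-side analogue that uses C11 `<stdatomic.h>` and POSIX threads in place of Zephyr's `atomic_t`, `atomic_inc()` and `atomic_get()`. The names `increment_worker()` and `run_two_workers()` are hypothetical. Note that a typical PC keeps its caches hardware-coherent, so this sketch demonstrates atomicity only, not the cache maintenance a non-coherent dual-core MCU may also need.

```c
#include <assert.h>
#include <pthread.h>
#include <stdatomic.h>
#include <stddef.h>

/* Host-side analogue of Attempt 2: two threads stand in for two cores. */
#define INCREMENTS_PER_THREAD 100000

static atomic_int shared_counter;

static void *increment_worker(void *arg)
{
	(void)arg;
	for (int i = 0; i < INCREMENTS_PER_THREAD; i++) {
		/* Indivisible read-modify-write, like atomic_inc() */
		atomic_fetch_add(&shared_counter, 1);
	}
	return NULL;
}

/* Runs both workers to completion and returns the final count. */
static int run_two_workers(void)
{
	pthread_t t0, t1;

	atomic_store(&shared_counter, 0);
	int rc0 = pthread_create(&t0, NULL, increment_worker, NULL);
	int rc1 = pthread_create(&t1, NULL, increment_worker, NULL);
	assert(rc0 == 0 && rc1 == 0);
	(void)rc0;
	(void)rc1;
	pthread_join(t0, NULL);
	pthread_join(t1, NULL);
	return atomic_load(&shared_counter); /* like atomic_get() */
}
```

With the atomic increment, `run_two_workers()` always returns exactly `2 * INCREMENTS_PER_THREAD`. Replacing it with `shared_counter++` on a plain `int` can lose updates when the threads run in parallel, which is the same failure mode as the inconsistent values in Attempt 1.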

Spinlock code snippet:

```c
static atomic_t lock; /* 0 = free, 1 = held */
```

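(Editor’s note: Zephyr also provides the k_spinlock API, k_spin_lock() and k_spin_unlock(), for SMP configurations in which both cores run the same kernel image.)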
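A lock built on an atomic variable such as `static atomic_t lock;` is typically a test-and-set loop. Below is a minimal host-side sketch in portable C11, assuming hypothetical names `toy_spin_lock()` and `toy_spin_unlock()`; in Zephyr code a similar loop could be written with `atomic_cas()`. Whether atomic operations work across the two cores at all depends on the SoC's memory system and on where the lock word lives, so check the device's reference manual before relying on this between cores.

```c
#include <assert.h>
#include <pthread.h>
#include <stdatomic.h>
#include <stddef.h>

/* Hypothetical test-and-set spinlock; lock_word plays the role of the
 * original `static atomic_t lock;` (0 = free, 1 = held). */
static atomic_int lock_word;
static int protected_counter; /* plain int, only touched under the lock */

static void toy_spin_lock(atomic_int *l)
{
	int expected = 0;

	/* Try to swap 0 -> 1; acquire ordering keeps the critical
	 * section's accesses from moving before the lock is taken. */
	while (!atomic_compare_exchange_weak_explicit(l, &expected, 1,
						      memory_order_acquire,
						      memory_order_relaxed)) {
		expected = 0; /* a failed CAS stores the current value here */
	}
}

static void toy_spin_unlock(atomic_int *l)
{
	/* Release ordering publishes the critical section's writes
	 * before the lock is seen as free. */
	atomic_store_explicit(l, 0, memory_order_release);
}

static void *locked_worker(void *arg)
{
	int iterations = *(const int *)arg;

	for (int i = 0; i < iterations; i++) {
		toy_spin_lock(&lock_word);
		protected_counter++; /* non-atomic, but serialized by the lock */
		toy_spin_unlock(&lock_word);
	}
	return NULL;
}

/* Two threads each add `iterations` to the counter under the lock. */
static int run_locked_workers(int iterations)
{
	pthread_t a, b;

	protected_counter = 0;
	atomic_store(&lock_word, 0);
	int rc_a = pthread_create(&a, NULL, locked_worker, &iterations);
	int rc_b = pthread_create(&b, NULL, locked_worker, &iterations);
	assert(rc_a == 0 && rc_b == 0);
	(void)rc_a;
	(void)rc_b;
	pthread_join(a, NULL);
	pthread_join(b, NULL);
	return protected_counter;
}
```

The design choice here is that the lock protects a plain `int`: only the lock word needs atomic access, and the acquire/release ordering on it is what makes the counter updates visible to the next lock holder.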

DMB (data memory barrier) code snippet:

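```c
#include <zephyr/kernel.h>
#include <zephyr/sys/atomic.h>
#include <zephyr/sys/barrier.h>
#include <SEGGER_SYSVIEW.h>

extern atomic_t shared_data; /* defined once in the file shared by both cores */

void core1_thread(void *p1, void *p2, void *p3)
{
	ARG_UNUSED(p1);
	ARG_UNUSED(p2);
	ARG_UNUSED(p3);

	while (1) {
		SEGGER_SYSVIEW_PrintfHost("Core1: Pre-Increment: %d", (int)atomic_get(&shared_data));
		atomic_inc(&shared_data);
		/* DMB on Arm: finish this update before any later memory access.
		 * atomic_inc() is usually already fully ordered; the fence matters
		 * most when plain (non-atomic) shared data is written as well. */
		barrier_dmem_fence_full();
		SEGGER_SYSVIEW_PrintfHost("Core1: Post-Increment: %d", (int)atomic_get(&shared_data));
		k_msleep(10);
	}
}
```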
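A memory barrier matters most in a message-passing pattern: one core writes plain data, then sets a flag, and the other core must not see the flag before the data. The host-side sketch below uses C11 `atomic_thread_fence()`; on Arm, compilers typically implement these release and acquire fences with a DMB instruction. The names `producer()`, `consumer()` and `run_message_passing()` are hypothetical. Keep in mind that a barrier only orders memory accesses; it does not clean or invalidate caches, so on cores without hardware coherency the shared buffer still needs cache maintenance.

```c
#include <assert.h>
#include <pthread.h>
#include <stdatomic.h>
#include <stddef.h>

/* Message passing: plain payload first, then a flag, kept in order by fences. */
static int payload;      /* plain shared data */
static atomic_int ready; /* 0 = not yet published */

static void *producer(void *arg)
{
	(void)arg;
	payload = 42;
	atomic_thread_fence(memory_order_release); /* payload before flag */
	atomic_store_explicit(&ready, 1, memory_order_relaxed);
	return NULL;
}

static void *consumer(void *arg)
{
	int *out = arg;

	while (atomic_load_explicit(&ready, memory_order_relaxed) == 0) {
		/* spin until the flag is set */
	}
	atomic_thread_fence(memory_order_acquire); /* flag before payload */
	*out = payload;
	return NULL;
}

/* Starts the consumer first, then the producer; returns what was received. */
static int run_message_passing(void)
{
	pthread_t p, c;
	int received = -1;

	payload = 0;
	atomic_store(&ready, 0);
	int rc_c = pthread_create(&c, NULL, consumer, &received);
	int rc_p = pthread_create(&p, NULL, producer, NULL);
	assert(rc_c == 0 && rc_p == 0);
	(void)rc_c;
	(void)rc_p;
	pthread_join(p, NULL);
	pthread_join(c, NULL);
	return received;
}
```

Because the release fence precedes the flag store and the acquire fence follows the flag load, a consumer that sees `ready == 1` is guaranteed to read the payload written before it.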

Debugging workflow tips

Step 1: Use SystemView to detect race conditions and to spot values that differ between the two cores' traces.

Step 2: Use Ozone to inspect memory, core registers, and the cache control settings to check whether the cores see coherent data.

Zephyr cache APIs

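Zephyr provides cache maintenance APIs such as sys_cache_data_invd_range() and sys_cache_data_flush_range() (the driver-level equivalents are cache_data_invd_range() and cache_data_flush_range()). Flush the range after writing shared data, and invalidate it before reading data the other core has written.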

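These calls only have an effect on cores that actually have a data cache, such as the Cortex-M7 with its L1 data cache; on cores without a data cache there is nothing for them to maintain.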
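Cache maintenance operates on whole cache lines (32 bytes on the Cortex-M7), so any line that overlaps the requested range is affected. Invalidating a buffer that shares a line with unrelated data can therefore discard that neighbor's pending writes, which is why shared buffers are commonly aligned and padded to the line size. The helper below is a hypothetical host-side sketch (`cache_line_range()` is not a Zephyr API) that computes which lines a given range actually touches.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Cortex-M7 L1 data cache lines are 32 bytes. */
#define CACHE_LINE_SIZE 32u

struct line_range {
	uintptr_t start; /* first byte of the first affected line */
	size_t len;      /* whole lines, a multiple of CACHE_LINE_SIZE */
};

/* Expand [addr, addr + size) to the cache lines it overlaps. */
static struct line_range cache_line_range(uintptr_t addr, size_t size)
{
	assert(size > 0);
	uintptr_t mask = ~(uintptr_t)(CACHE_LINE_SIZE - 1);
	uintptr_t start = addr & mask;                          /* round down */
	uintptr_t end = (addr + size + CACHE_LINE_SIZE - 1) & mask; /* round up */
	struct line_range r = { start, (size_t)(end - start) };
	return r;
}
```

For example, an 8-byte object at offset 0x1C within a line straddles two lines, so maintaining it touches 64 bytes; if unrelated data shares either line, placing the shared object in its own line-aligned, line-sized slot avoids the problem.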

Up next: Inter-core messaging

In the next part of this series, we’ll discuss inter-core messaging.